The Logarithmic Engineer

There was an assumption embedded in the software era:

If you wanted to build more, you hired more engineers. Scope expanded, headcount followed. It wasn’t perfect, but it held well enough to build the largest companies in history. That assumption is now breaking, not gradually but in real time, and what replaces it is not linear. It is logarithmic.

The Break

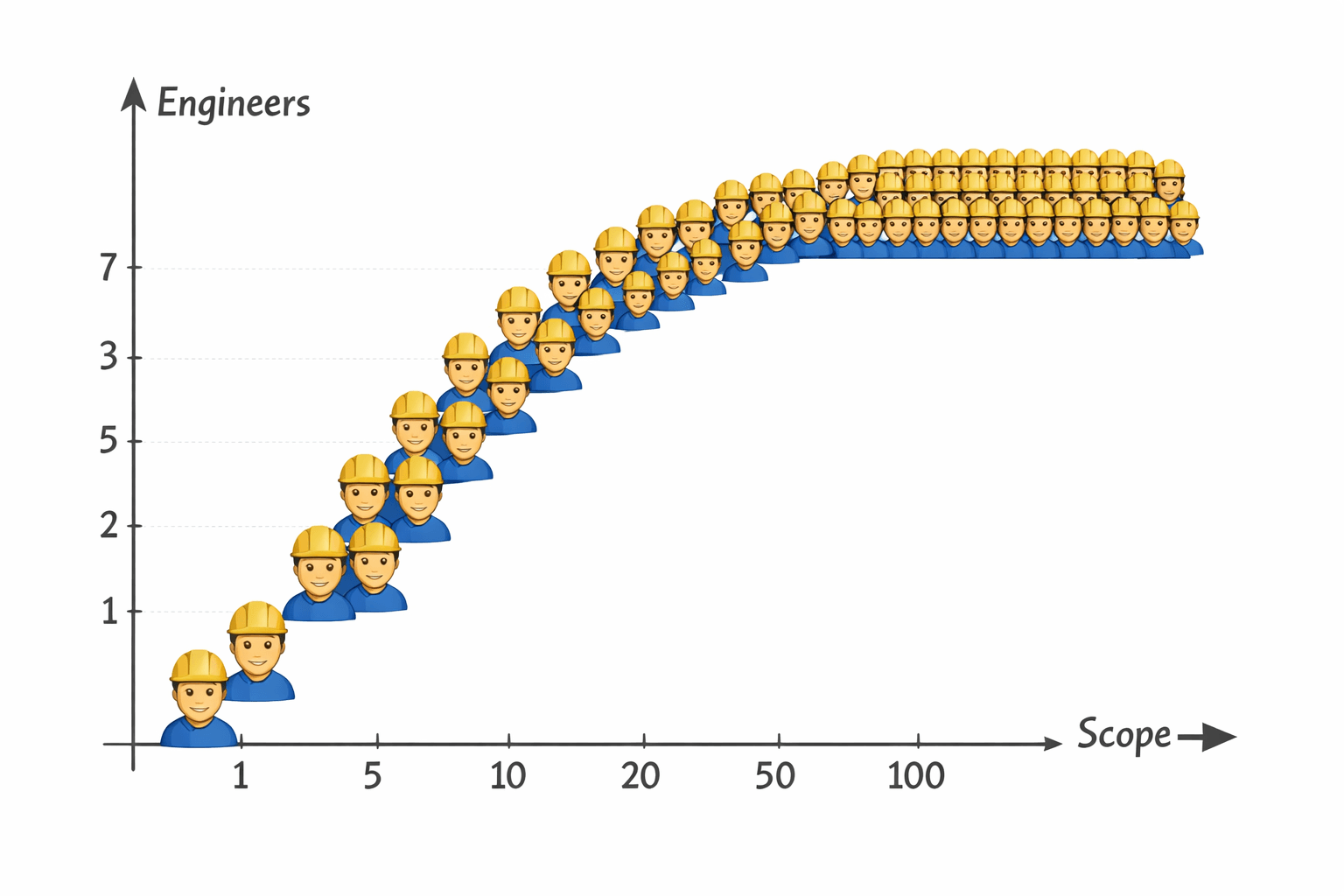

In the pre-AI model, the relationship between scope and labor looked roughly like this:

E ∝ S

Where:

E = number of engineers

S = system scope: features, integrations, markets

In practice, it softened slightly due to reuse:

E = α · S^β, where β ≈ 0.7–1.0

The intuition still held. More surface area required more humans, because humans were doing both:

1. planning the system

2. executing the system

That coupling is now gone.

The Separation of Layers

AI introduces a clean separation that didn't exist before:

Execution layer → scales with compute

Planning layer → remains human-constrained

Execution is now:

Execution ∝ C

Where:

C = compute: agents, inference, automation depth

This means the act of building, integrating, testing, and even debugging is increasingly handled by systems that scale horizontally without adding people.

So what remains for humans?

Planning.

The True Bottleneck

Planning is not the enumeration of tasks. It is the shaping of systems:

1. defining abstractions

2. resolving constraints

3. deciding what should exist at all

And critically, planning does not scale linearly with scope, because systems compress. A hundred features do not require a hundred independent plans. They collapse into shared primitives, interfaces, and patterns.

So instead of:

P ∝ S

We get something closer to:

P ∝ log(S)

Where:

P = planning complexity

This is not exact, but it is directionally correct and increasingly observable.
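One way to see why compression could produce logarithmic growth is a toy counting model. Assume, purely for illustration, that each shared primitive offers a small number of interchangeable options, so that `k` primitives combine into `options ** k` distinct feature configurations. The function name and the model itself are assumptions, not something the argument above derives.

```python
def primitives_needed(features: int, options_per_primitive: int = 2) -> int:
    """Smallest k such that options_per_primitive ** k >= features.

    Toy model (an assumption): every feature is one combination of
    choices across k shared primitives.
    """
    if features < 1 or options_per_primitive < 2:
        raise ValueError("need features >= 1 and options_per_primitive >= 2")
    k = 0
    capacity = 1  # number of combinations expressible with k primitives
    while capacity < features:
        capacity *= options_per_primitive
        k += 1
    return k


for features in (100, 10_000, 1_000_000):
    print(features, primitives_needed(features))
# prints:
# 100 7
# 10000 14
# 1000000 20
```

In this toy model, multiplying the feature count by 100 adds only about seven binary primitives each time, which is the kind of behavior P ∝ log(S) describes.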

The Logarithmic Engineer

If engineers are primarily anchored to the planning layer:

E ∝ P

And:

P ∝ log(S)

Then:

E ∝ log(S)

This is the crucial structural shift: engineering headcount grows only with the logarithm of system scope, so scope can grow exponentially while headcount rises by a roughly constant increment for each tenfold increase.
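To make the contrast concrete, here is a minimal Python sketch comparing the three headcount models discussed so far. The constants (`k`, `alpha`, `beta`, `e0`) and the function names are illustrative assumptions, not measured values; only the shapes of the curves matter.

```python
import math

# All constants below are hypothetical, chosen only to show curve shapes.


def headcount_linear(scope: float, k: float = 1.0) -> float:
    """Pre-AI intuition: E = k * S."""
    return k * scope


def headcount_power(scope: float, alpha: float = 1.0, beta: float = 0.85) -> float:
    """Softened by reuse: E = alpha * S**beta, with beta roughly in 0.7-1.0."""
    return alpha * scope ** beta


def headcount_log(scope: float, k: float = 5.0, e0: float = 1.0) -> float:
    """Planning-anchored: E = e0 + k * log10(S), defined for S >= 1."""
    return e0 + k * math.log10(scope)


if __name__ == "__main__":
    print(f"{'scope':>10} {'linear':>10} {'power':>10} {'log':>8}")
    for scope in (10, 100, 1_000, 10_000):
        print(
            f"{scope:>10} {headcount_linear(scope):>10.0f} "
            f"{headcount_power(scope):>10.0f} {headcount_log(scope):>8.1f}"
        )
```

Under these assumed constants, each tenfold increase in scope adds the same fixed number of engineers (`k`) in the logarithmic model, while the linear model multiplies headcount by ten.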

Market Expansion Without Headcount Expansion

Now introduce market segmentation.

Let:

M = number of markets, customer types, or verticals

S₀ = scope of the base system serving a single market

Each new market adds variation, but not a full system rebuild.

So:

S ≈ S₀ · M

Then:

E ∝ log(S₀ · M)

Which simplifies to:

E ∝ log(M) + constant

Meaning:

Going from 1 market to 10 markets is a step.

Going from 10 to 100 is another step of about the same size, and each step is smaller than intuition expects. You do not double engineers when you double reach. You increment them.
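The step behavior follows from the identity log(S₀ · M) = log(S₀) + log(M). A small sketch, assuming hypothetical values for the base scope and the proportionality constant `k`, shows that the step from 1 to 10 markets equals the step from 10 to 100, whatever the base scope is:

```python
import math


def headcount_for_markets(markets: int, base_scope: float = 50.0, k: float = 5.0) -> float:
    """E = k * log10(S0 * M), with S0 = base_scope (hypothetical units)."""
    return k * math.log10(base_scope * markets)


def headcount_step(m_from: int, m_to: int, base_scope: float = 50.0, k: float = 5.0) -> float:
    """Extra engineers needed to expand from m_from to m_to markets."""
    return (
        headcount_for_markets(m_to, base_scope, k)
        - headcount_for_markets(m_from, base_scope, k)
    )


if __name__ == "__main__":
    for m_from, m_to in ((1, 10), (10, 100), (100, 1_000)):
        print(f"{m_from:>4} -> {m_to:<5} adds {headcount_step(m_from, m_to):.2f} engineers")
```

The step depends only on the ratio `m_to / m_from`. The base scope contributes the "constant" term in E ∝ log(M) + constant and cancels out of every step.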

Why This Actually Works

This only holds because three deep changes have occurred:

- Execution has become elastic. Agents absorb implementation work, scaling with compute rather than labor.

- Variability collapses into configuration. Differences between markets become prompts, schemas, and constraints, not codebases.

- Systems are increasingly modular. Once primitives exist, expansion is recombination, not invention.

The result is that most “new work” is no longer work in the traditional sense. It is selection.
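The idea that market differences become configuration rather than codebases can be sketched as follows. The `MarketConfig` class, the `validate` function, and the two example markets are all hypothetical, invented here only to show the shape of the idea.

```python
from dataclasses import dataclass, field


@dataclass
class MarketConfig:
    """Everything that differs between markets, held as data (hypothetical)."""
    name: str
    prompt: str
    schema: dict[str, type]
    constraints: dict[str, float] = field(default_factory=dict)


def validate(record: dict, config: MarketConfig) -> bool:
    """Shared primitive: check that a record has every field the schema requires."""
    return all(
        isinstance(record.get(key), expected)
        for key, expected in config.schema.items()
    )


# Adding a market means adding an entry here, not a new codebase.
MARKETS = {
    "retail": MarketConfig(
        name="retail",
        prompt="Answer as a retail support assistant.",
        schema={"sku": str, "price": float},
        constraints={"max_discount": 0.3},
    ),
    "clinics": MarketConfig(
        name="clinics",
        prompt="Answer as a clinic scheduling assistant.",
        schema={"patient_id": str, "slot": int},
    ),
}

print(validate({"sku": "A-100", "price": 19.99}, MARKETS["retail"]))  # True
print(validate({"sku": "A-100"}, MARKETS["retail"]))                  # False
```

The same `validate` primitive serves every market, so the per-market work in this sketch is choosing prompts, schemas, and constraints.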

The Constraint That Remains

There is still a ceiling, but it is no longer execution capacity. It is coherence. Historically, organizations broke because communication scaled poorly, roughly:

Coordination cost ∝ n²

Where:

n = number of people who must coordinate

Now, with agents absorbing execution, the constraint shifts upward:

E_max ≈ f(human cognitive bandwidth)

And that function grows very slowly. In some cases, it is effectively constant.
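The n² coordination term can be made concrete by counting pairwise communication channels, n(n − 1)/2, which is a standard way to express it. The sketch below is illustrative only, and the function name is an assumption.

```python
def communication_channels(people: int) -> int:
    """Number of distinct pairs among `people` individuals: n * (n - 1) / 2."""
    if people < 0:
        raise ValueError("people must be non-negative")
    return people * (people - 1) // 2


for team_size in (5, 10, 100):
    print(team_size, communication_channels(team_size))
# prints:
# 5 10
# 10 45
# 100 4950
```

A tenfold increase in team size, from 10 to 100, multiplies the channel count by 110, which is why a small planning group avoids most of this cost.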

The Dangerous Temptation

At this point, there is an obvious next step:

If execution has been automated, why not automate planning as well? Why not let agents define the architecture, set the objectives, and determine the direction? Technically, this is already possible in constrained domains, but this is where the system breaks in a non-obvious way. Planning is not just optimization. Planning is alignment.

The Anchor

The planning layer is where intent enters the system. It is where values are encoded, tradeoffs are chosen, and direction is set. If execution is handed to machines, nothing fundamental is lost. However, if planning is handed over, something irreversible is lost, because then:

Direction ∝ model objective functions

Direction then follows model objectives rather than human intent, and those are not the same thing. Even if they appear aligned locally, they drift globally. So while the equation holds:

E ∝ log(S)

There is an implicit constraint beneath it:

E must remain anchored to P, and P must remain human-owned

The Economic Through Line

This connects directly to the broader economic model we explored previously. We described a system where output scales with participants:

Output ∝ N^α, where α > 1

Now consider what happens when “participants” can be manufactured:

- AI agents

- autonomous systems

- humanoid robots

And their productive capacity scales with compute:

Output ∝ C

While human labor scales as:

E ∝ log(S)

You get a divergence:

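Treat output as the numerator and human headcount as the denominator. If compute grows exponentially, the numerator grows exponentially but the denominator grows only logarithmically, which means: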

Output per human → asymptotically explodes

Not linearly, and not exponentially, but at a rate that becomes difficult to intuit.
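A short numerical sketch can show the divergence. It assumes, hypothetically, that Output = C, that E = e0 + k · log10(S), and that compute and scope both grow tenfold per period. None of these constants or growth rates come from measured data.

```python
import math


def output_per_human(compute: float, scope: float, e0: float = 1.0, k: float = 5.0) -> float:
    """Output / E, with Output = C and E = e0 + k * log10(S) (assumed forms)."""
    engineers = e0 + k * math.log10(scope)
    return compute / engineers


# Assumption: compute and scope both grow tenfold per period.
ratios = [output_per_human(compute=10.0 ** t, scope=10.0 ** t) for t in range(1, 7)]

for period, ratio in enumerate(ratios, start=1):
    print(f"period {period}: output per human = {ratio:,.1f}")
```

Under these assumptions the ratio rises every period, because the numerator is multiplied by ten while the denominator gains only a fixed increment.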

The New Role of the Engineer

In this world, the engineer is no longer the builder of systems or the writer of every component. They become the definer of boundaries, the curator of abstractions, and the anchor of intent: a small number of humans shaping systems that expand far beyond their direct contribution. The industrial era scaled with labor, the software era scaled with developers, and the AI era, as we already know, scales with compute. The role of the engineer compresses into something far more critical and far more fragile: a logarithmic constraint on an otherwise exponential system.

Remove that constraint, and the system still grows. But it no longer grows in a direction we chose. Aligned growth is here, but the specter of unaligned growth towers over humanity.